Introduction

This contest focuses on recognizing different, highly similar hand motions of a professional potter. Participants will try to recognize the hand motions from coordinate data of both of the potter's hands. This track is designed to test whether hand recognition systems can distinguish similar motions of two hands. The motions were all recorded using a Vicon system and pre-processed into a coordinate system using Blender and Vicon Shogun Post.

Objective

The objective of this track is to evaluate the performance of different hand recognition algorithms using a new dataset of highly similar hand motions of a potter creating a vase.

Task and evaluation

Participants should develop methods that can detect and classify hand movements based on the given skeletal coordinate system in the test set. The result should consist of a single text file in which each row gives the number of a motion in the test dataset followed by its predicted motion class. Participants should submit this file together with their algorithms and information on how to run them for verification purposes. For the evaluation we will look at the accuracy per class as well as the total accuracy over the entire test set. We will also create a confusion matrix to draw conclusions about the methods.

Results submission

The submission should include 4 parts:

- A small readme file with a short description of how to run your code and which parameters to use. (This is so we can run the code ourselves on a different test set to verify the results and prevent unfair behavior.)

- A result file consisting of a single text file in which each row contains the number of the motion (in alphabetically ascending order in the test dataset) followed by the predicted motion class. The row format is {number_of_motion} (space) {motion_class} {new line}, as in the example on the right (the motion classes given in the example are incorrect).

- Executable code.

- A method description of 1-2 pages in LaTeX, containing the important implementation details of the classification system, using the C&G LaTeX template found here under section: 3. The main text.
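
As an illustration of the result file format and of the measures named under Task and evaluation, the following is a minimal Python sketch, not the organizers' evaluation code. The function names, the use of integer motion numbers written in ascending numeric order, and the class labels in the usage note are all assumptions made for the example.

```python
from collections import Counter, defaultdict


def format_results(predictions):
    """Build the result file text: one '{number_of_motion} {motion_class}' row per motion.

    Assumption: motion numbers are integers and rows are written in ascending order.
    """
    rows = [f"{number} {label}" for number, label in sorted(predictions.items())]
    return "\n".join(rows) + "\n"


def parse_results(text):
    """Parse result file text back into {number_of_motion: motion_class}."""
    parsed = {}
    for row in text.splitlines():
        if not row.strip():
            continue  # skip empty rows
        number, label = row.split(maxsplit=1)
        parsed[int(number)] = label.strip()
    return parsed


def evaluate(truth, predicted):
    """Return (total accuracy, accuracy per class, confusion counts).

    confusion[true_class][predicted_class] counts the motions of true_class
    that were predicted as predicted_class (None if a prediction is missing).
    """
    confusion = defaultdict(Counter)
    for number, true_label in truth.items():
        confusion[true_label][predicted.get(number)] += 1
    correct = sum(confusion[c][c] for c in confusion)
    total_accuracy = correct / len(truth)
    per_class = {c: confusion[c][c] / sum(confusion[c].values()) for c in confusion}
    return total_accuracy, per_class, confusion
```

For example, with hypothetical labels, `format_results({2: "centering", 1: "pressing"})` yields the two rows `1 pressing` and `2 centering`, and `evaluate(truth, parse_results(text))` compares such a file against a ground-truth mapping of the same shape.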

Dataset

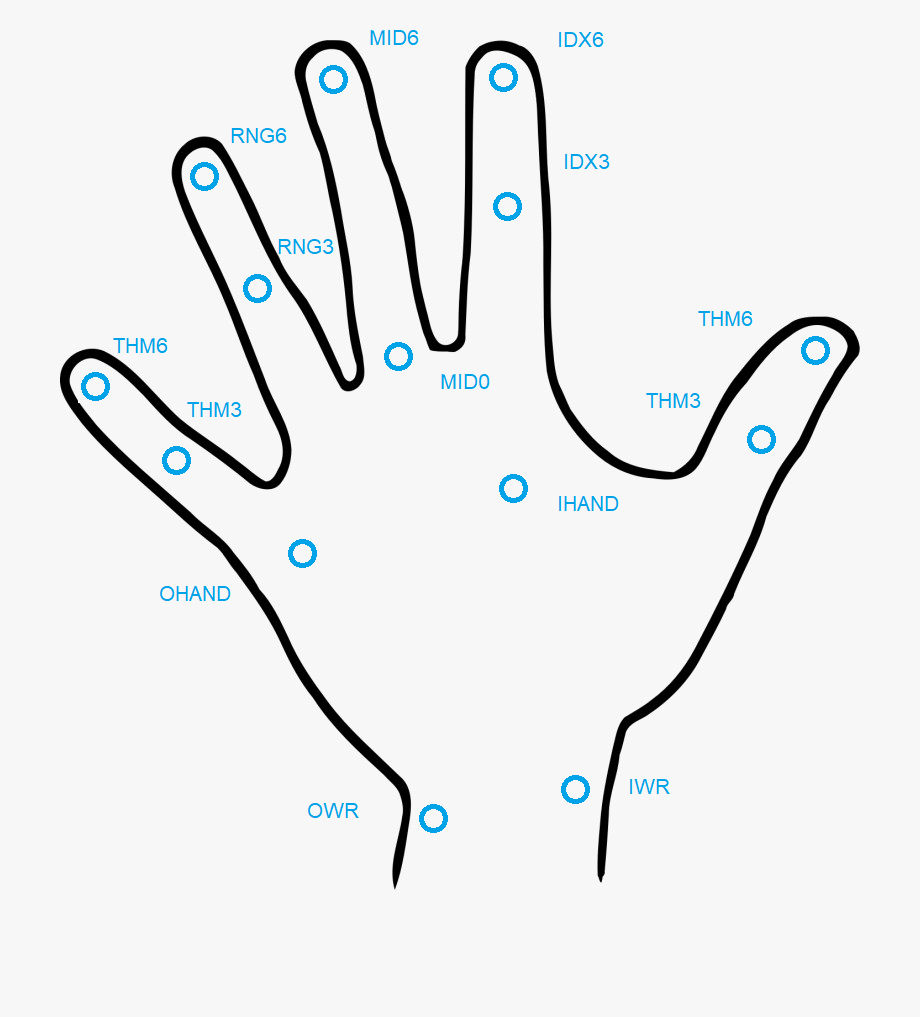

We recorded hand motions of an experienced potter who sculpted the same pot with and without clay. We captured our data using a Vicon system with 14 Vantage cameras that track reflective markers on the subject's hands. The motions are processed and saved as text files in which each row holds the data of a specific frame: the positions of 28 markers (14 per hand, see picture), each given as x;y;z float coordinates. The dataset is split into a training and a testing set (70/30).

The structure of the coordinate system is as follows:

Frame; LIWR(x;y;z); LOWR(x;y;z); LIHAND(x;y;z); LOHAND(x;y;z); LTHM3(x;y;z); LTHM6(x;y;z); LIDX3(x;y;z); LIDX6(x;y;z); LMID0(x;y;z); LMID6(x;y;z); LRNG3(x;y;z); LRNG6(x;y;z); LPNK3(x;y;z); LPNK6(x;y;z); RIWR(x;y;z); ROWR(x;y;z); RIHAND(x;y;z); ROHAND(x;y;z); RTHM3(x;y;z); RTHM6(x;y;z); RIDX3(x;y;z); RIDX6(x;y;z); RMID0(x;y;z); RMID6(x;y;z); RRNG3(x;y;z); RRNG6(x;y;z); RPNK3(x;y;z); RPNK6(x;y;z);
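
To make the row layout concrete, here is a minimal Python sketch of reading one frame row into named marker positions. It assumes that fields are separated by `;`, that a row may end with a trailing `;`, and that every coordinate is present; `parse_frame` and `MARKER_NAMES` are names introduced for this example and are not part of the dataset.

```python
# Marker suffixes per hand, in the column order listed above.
MARKERS = [
    "IWR", "OWR", "IHAND", "OHAND", "THM3", "THM6", "IDX3",
    "IDX6", "MID0", "MID6", "RNG3", "RNG6", "PNK3", "PNK6",
]
# 14 left-hand markers (prefix L) followed by 14 right-hand markers (prefix R).
MARKER_NAMES = [side + name for side in ("L", "R") for name in MARKERS]


def parse_frame(row):
    """Split one row into (frame number, {marker name: (x, y, z)})."""
    # Assumption: ';' separates fields and the row may end with a trailing ';'.
    fields = [f.strip() for f in row.strip().rstrip(";").split(";")]
    expected = 1 + 3 * len(MARKER_NAMES)  # frame number + 28 markers * 3 coordinates
    if len(fields) != expected:
        raise ValueError(f"expected {expected} fields, got {len(fields)}")
    frame = int(float(fields[0]))
    values = [float(v) for v in fields[1:]]
    markers = {
        name: tuple(values[3 * i : 3 * i + 3])
        for i, name in enumerate(MARKER_NAMES)
    }
    return frame, markers
```

Calling `parse_frame` on each row of a motion file gives a sequence of frames, so that for instance `markers["LTHM6"]` holds the x, y, z position of that left-thumb marker in the frame. If the actual files use a different separator or contain missing values, the splitting step would need to be adapted.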

The motion classes used in this task are:

- Pressing the clay to make it stick to the pottery wheel.

- Making a hole in the clay.

- Tightening the cylinder of the clay.

- Centering the clay.

- Raising the base structure of the clay.

- Smoothing the walls.

- Using the sponge to make the clay more moist.

Data split (Train/Test): the data, split into a training and a test set.

Important dates

The following list is a step-by-step description of the activities:

- February 16 - Data made available.

- March 8 - Registration deadline.

- March 29 - Submission deadline of the results. Results are submitted along with a description of the method(s) used to generate the results.

- April 12 - Track report submission to Computers & Graphics for review.

- May 3 - First review done, first stage decision on acceptance or rejection.

- May 31 - First revision due.

- June 24 - Second stage of reviews complete, decision on acceptance, rejection, or acceptance as short paper.

- July 4 - Final version submission.

- July 7 - Final decision on acceptance or rejection.

- August - Publication online in Computers & Graphics journal.

- August 26-27 - Presentation at the Eurographics 2024 Symposium on 3D Object Retrieval.